Operating System Security

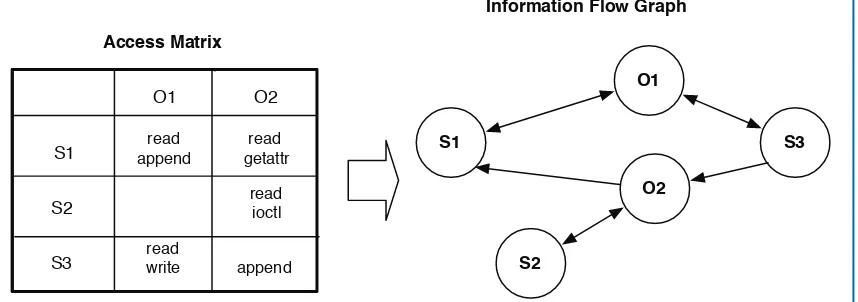

![Figure 3.1: Multics process’s segment addressing (adapted from [159]). Each process stores a reference to its descriptor segment in its descriptor base register. The descriptor segment stores segment descriptor words that reference each of the process’s active segments (e.g., segments 0, 1, and N).](https://thumb-ap.123doks.com/thumbv2/123dok/1140985.894063/44.612.136.556.129.451/addressing-referenceto-descriptor-descriptor-descriptor-descriptor-wordsthat-reference.webp)