THE INTRA-RATER RELIABILITY

CONSISTENCY OF ENGLISH TEACHERS AT

AL-AMIN ISLAMIC BOARDING SCHOOL

MOJOKERTO IN SCORING ESSAY TEST

THESIS

Submitted in Partial Fulfillment of the Requirements for the Degree of Sarjana Pendidikan (S.Pd) in Teaching English

By:

Siti Ghoniyya

D05212030

ENGLISH TEACHER EDUCATION DEPARTMENT

FACULTY OF EDUCATION AND TEACHER TRAINING

SUNAN AMPEL STATE ISLAMIC UNIVERSITY

SURABAYA

ABSTRACT

Ghoniyya, Siti. (2016). “The Intra-Rater Reliability Consistency of English Teachers at Al-Amin Islamic Boarding School Mojokerto in Scoring Essay Test”. A thesis. English Teacher Education Department, Faculty of Education and Teacher Training, Sunan Ampel State Islamic University, Surabaya. Advisor: Sigit Pramono Jati.

Keywords: intra-rater reliability, consistency, scoring essay test

This study explores the consistency of English teachers’ intra-rater reliability in scoring essay tests. By quantitatively analyzing the teachers’ pre- and post-scores, given two months apart, this study showed that the intra-rater reliability of the English raters at Al-Amin Islamic Boarding School Mojokerto in scoring essay tests was consistent. This was shown by the Cronbach alpha coefficient computed in SPSS 23 as the descriptive statistical analysis and by the paired t-test as the inferential statistical analysis. The mean alpha coefficient of the intraclass correlation was 0.942. This indicates that the English teachers who rated the essay test in this study had good reliability, since a coefficient above 0.7 is required for raters to be considered internally consistent. In addition, the paired t-test showed that the obtained t value exceeded the table value (5%, N-1): 3.541 > 2.776, and Sig. = 0.02 was below 0.05, the significance level of this study. These calculations met the criteria for rejecting the null hypothesis. The paired t-test also indicated that the descriptive statistical result was genuine rather than the product of chance.
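As an illustration of the paired t-test decision rule described in this abstract, the following Python sketch computes t from the pre-minus-post differences and compares |t| with the tabled critical value of 2.776 (two-tailed, 5%, df = N-1 = 4). The function name `paired_t` and the five score pairs are hypothetical and are not the data of this study; the statistic tests whether the mean difference between the two scorings departs from zero.

```python
import math
from statistics import mean, stdev

def paired_t(pre, post):
    """Return (t, df) for a paired t-test on two equal-length score lists."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length score lists with at least two pairs")
    diffs = [a - b for a, b in zip(pre, post)]  # pre-score minus post-score per essay
    n = len(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences (n - 1 denominator)
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1

# Hypothetical pre- and post-scores for five essays (illustrative only)
pre_scores = [78, 82, 70, 88, 75]
post_scores = [76, 80, 69, 85, 74]

t_value, df = paired_t(pre_scores, post_scores)
T_TABLE = 2.776  # two-tailed critical t at the 5% level for df = 4, as quoted above
reject_null = abs(t_value) > T_TABLE
print(f"t = {t_value:.3f}, df = {df}, reject H0: {reject_null}")
```

With real data, the same statistic (together with its p-value) can be cross-checked against SciPy's `scipy.stats.ttest_rel`.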

ABSTRAK (INDONESIAN ABSTRACT)

Ghoniyya, Siti. (2016). “The Intra-Rater Reliability Consistency of English Teachers at Al-Amin Islamic Boarding School Mojokerto in Scoring Essay Test”. Undergraduate thesis (Skripsi). English Teacher Education Study Program, Faculty of Education and Teacher Training, Sunan Ampel State Islamic University, Surabaya. Advisor: Sigit Pramono Jati.

Keywords: intra-rater reliability, consistency, scoring essay test

This study investigates the consistency of English teachers’ self-reliability (intra-rater reliability) in scoring students’ essays. By quantitatively analyzing the two scores (pre- and post-) that each English teacher gave to the same essay, with a two-month interval between the two scorings, this study shows that the intra-rater reliability of the English teachers at Al-Amin Islamic Boarding School Mojokerto in scoring students’ essays is consistent. This is shown by the Cronbach alpha coefficient in SPSS 23 as the descriptive statistic and by the paired t-test as the inferential statistical analysis. The alpha coefficient of the intraclass correlation for the average scores was 0.942. This means that the English teachers, as raters of the students’ essays, have very good reliability, given that the coefficient must exceed 0.7 for a teacher to be considered sufficiently consistent in scoring students’ essays. In addition, the paired t-test showed that the t value exceeded the table t value (5%, N-1): 3.541 > 2.776, and Sig. = 0.02 was below 0.05, the significance level of this study. These calculations met the requirements for rejecting the null hypothesis. Furthermore, the paired t-test result also indicates that the descriptive statistical result is genuine and not a coincidence.


TABLE OF CONTENTS

TITLE SHEET
APPROVAL SHEET
EXAMINERS’ APPROVAL SHEET
MOTTO
DEDICATION SHEET
ABSTRACT
ACKNOWLEDGEMENT
STATEMENT OF AUTHENTICITY
TABLE OF CONTENTS
LIST OF TABLES
LIST OF DIAGRAMS
LIST OF APPENDICES

CHAPTER I INTRODUCTION
A. Background of the Study
B. Problem of the Study
C. Objective of the Study
D. Hypothesis of the Study
E. Significance of the Study
F. Scope and Limitation of the Study

CHAPTER II REVIEW OF RELATED LITERATURE
A. Review of Previous Study
B. Theoretical Background
1. Understanding of Consistency
2. Understanding of Intra-rater Reliability
3. Understanding of Essay Test
4. Understanding of Scoring Essay Test

CHAPTER III RESEARCH METHOD
A. Research Design
B. Subject of the Research
1. Population
2. Sample
C. Setting of the Research
1. Place
2. Time
D. Data and Source of Data
E. Technique Data Collection and Research Instrument
F. Data Analysis Technique
G. Reliability and Validity

CHAPTER IV FINDINGS AND DISCUSSIONS
A. Findings
1. Descriptive Statistics
2. Inferential Statistics

CHAPTER V CONCLUSION AND SUGGESTION
A. Conclusion
B. Suggestion
1. For the Teachers
2. For Future Researchers

REFERENCES

LIST OF TABLES

3.1 Table of Reliability Interpretation
4.1 Table of Raters’ Pre- and Post-Score of Five Same Essays
4.2 Table of Cronbach Alpha Coefficient Result of Raters’ Intra-Rater Reliability Consistency
4.3 Table of Raters’ Reliability Interpretation Result
4.4 Table of All Essays’ Average Results
4.5 Output of Intraclass Correlation in Cronbach Alpha Coefficient of

LIST OF DIAGRAMS


LIST OF APPENDICES

Appendix 1: Question Guide of Essay Test

CHAPTER I

INTRODUCTION

This chapter presents the background of the study, which explains the important reasons why the researcher conducted research on this topic. The problem is then formulated as a research question, together with the objective of the study. The usefulness of the research is described in the significance of the study to show why the research is important. In addition, the scope and limitation explain the constraints of the study, that is, the aspects that are not covered here. Finally, the definition of key terms gives simple descriptions of the important key words of the research.

A. Background of the Study

Today, ESL teachers and tutors (English language program teachers) increasingly choose performance-based assessment, such as oral production, written production, open-ended responses, integrated performance (across skill areas), group performance, and other interactive tasks, to evaluate students’ language ability.1 This is also called direct assessment, since it provides more direct evidence of the meaningful application of knowledge and skills.

There are many kinds of writing tests, such as paragraph construction tests, short-answer and sentence completion tests, picture-cued tests, and essay tests. This study focuses on the essay test as the most appropriate test for measuring students’ critical thinking and conscious mental processes. An essay test is a form of written production assessment that requires students to construct a fairly long written response of up to several paragraphs. Scoring it requires expert judgment rather than the application of a clerical key.2 This test is commonly chosen to evaluate students’ writing ability because of its strengths: it can test complex learning outcomes in writing, it poses a more realistic task, and it requires students to use their own writing skills.

Because constructed-response essay items ask students to produce samples of normative language, they provide a more valid measure of communicative writing ability; however, the process of scoring essay items is quite complex. Here, human raters face a new challenge in defending the reliability and construct validity of test scores, since each rater perceives performances differently and has tendencies toward leniency or severity. This creates a high possibility of subjectivity, as raters apply their own personal scoring criteria.

Subjectivity in scoring a test can cast doubt on the stability of the estimate. As explained above, not only subjectivity but also human error and bias during the scoring process can produce inconsistent grades for each student. This inconsistency lowers rater reliability, which in turn reduces the accuracy of the assessment. In evaluating writing ability, rater reliability is difficult to achieve, since writing proficiency involves numerous traits that are hard to define.3 This agrees with James Dean Brown’s statement that the very difficulty of measuring mental traits explains why consistency is of particular concern to language testers.4 In other words, all English teachers and testers need to be aware of scoring consistency, since it determines the objectivity of the “real” score that each student should receive.

Rater consistency in scoring essay tests can be measured in two ways: inter-rater and intra-rater reliability. Inter-rater reliability is estimated by examining the scores produced by two raters and calculating a correlation coefficient between the two sets of scores.5 Many researchers outside Indonesia have preferred this type for evaluating rater stability, since objectivity is more visible when the scores produced by two or more raters are compared. In practice, however, English teachers in Indonesia are not accustomed to teaching and assessing their students’ tests in pairs.

3 H. Douglas Brown, Language Assessment …….. 21.

4 James Dean Brown, Testing in Language Programs: A Comprehensive Guide to English Language Assessment (Singapore: The McGraw-Hill Companies, Inc., 2005), 169.

This study pays particular attention to intra-rater reliability. Intra-rater unreliability is a common occurrence for classroom teachers, such as teachers in Indonesia, because of unclear scoring criteria, fatigue, bias toward particular “good” and “bad” students, or simple carelessness.6 This type of reliability allows us to measure an individual rater by comparing two scores produced by the same rater for the same essay at different times (pre- and post-scores). Unfortunately, Indonesian English teachers pay little attention to evaluating their own consistency in scoring their students’ tests, especially essay tests, which are subjective tests in which raters may be subjective during the scoring process. This is reflected in the lack of studies that examine rater consistency using this method.

The researcher surveyed theses on language testing written by students of the English Teacher Education Department of UIN Sunan Ampel Surabaya. Most of them concentrate on assessment types such as paper project assessment, self-assessment, formative assessment, and peer assessment. A minority have analyzed the validity of tests, such as content validity and face validity, as well as the difficulty index and item discrimination. There is one study of essay tests, by Ita Faradillah, entitled “An Analysis of Essay Test on English Final Test for Grade Eleven Students of SMAN 1 Lamongan”, but it analyzed only the content validity, item discrimination, and difficulty index of the essay test.7 The researcher found no studies on reliability, especially intra-rater reliability (internal rater consistency), in scoring essay tests, even though it plays the crucial role explained above. Therefore, the researcher examines this topic thoroughly.

Al-Amin Islamic Boarding School is the pioneer of bilingual Islamic boarding schools in Mojokerto, which means that it serves as a model for newer bilingual schools. Because it is a bilingual school, its students must speak English or Arabic in their daily conversation. In addition, they attend an extra language class in the evening to deepen their language skills, one of which is writing.

Language teachers clearly play an important part here. They have to master the language very well to teach and test the students. Mastery of the language here means that an English teacher must hold a college degree and a national language certificate. In other words, they are professional enough to be good teachers, as certified by a college, and are expected to be valid and reliable language testers. Because the number of language teachers is limited, each teacher is in charge of only one class. They teach, test, and grade their students by themselves, especially when examining students’ writing skills, such as in an essay test. Therefore, it is very important to know whether the English teachers’ intra-rater reliability is consistent in scoring essay tests, in order to understand how objective they are in assessing a subjective test.

B. Problem of the Study

Based on the background above, the research question of this study is formulated as follows:

Is the intra-rater reliability of the English teachers at Al-Amin Islamic Boarding School Mojokerto consistent in scoring essay tests?

C. Objective of the Study

This study aims to determine whether the intra-rater reliability of the English teachers is consistent in scoring essay tests.

D. Hypothesis of the Study


E. Significance of the Study

The findings of this study are expected to raise English teachers’ awareness of the importance of intra-rater reliability consistency in writing assessment, especially in essay tests. Teachers need to know whether they themselves are consistent in evaluating their students’ writing skills. This awareness should make them more objective in assessing subjective tests. As a result, English teachers will be more careful in scoring essay tests, so that students receive their “real” scores and appropriate feedback.

F. Scope and Limitation of the Study

The scope of this study is to determine whether the intra-rater reliability of English teachers in scoring the daily essay test of grade eleven at Al-Amin Islamic Boarding Senior High School Mojokerto is consistent. This research does not examine their reliability by comparing different teachers’ scores (inter-rater reliability) or any other kind of reliability; it measures only the English teachers’ level of intra-rater reliability.

The second limitation of this research is that it was conducted only once. That is, the research analyzed the teachers’ consistency in this assessment only, so the result may differ at another time.

G. Definition of Key Terms

1. Consistency

According to the Oxford Dictionary, consistency is the quality of being consistent, and consistent means behaving in the same way or having the same opinions, standards, etc.8 In short, consistency is the quality of holding the same opinion of something.

In this study, consistency refers to the English language teachers’ consistency in scoring essay tests, investigated by obtaining their intra-rater reliability level through statistical analysis of their pre- and post-scores.

2. Intra-rater Reliability

Intra-rater reliability is a kind of rater reliability that is typically estimated by obtaining two sets of scores produced by the same rater for the same group of students (for example, a rater scores one group’s set of compositions on two successive occasions about two weeks apart) and calculating a correlation coefficient between those two sets of scores.9
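To make the correlational estimate in this definition concrete, the Python sketch below computes a Pearson correlation between one rater’s two sets of scores for the same compositions. The helper name `pearson_r` and the scores are hypothetical; with real data, the two lists would hold the same rater’s first and second scoring of each composition, in the same order.

```python
import math

def pearson_r(first, second):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(first)
    if n != len(second) or n < 2:
        raise ValueError("need two equal-length score lists with at least two scores")
    mean1, mean2 = sum(first) / n, sum(second) / n
    # Sum of cross-products and sums of squared deviations from each mean
    sxy = sum((a - mean1) * (b - mean2) for a, b in zip(first, second))
    sxx = sum((a - mean1) ** 2 for a in first)
    syy = sum((b - mean2) ** 2 for b in second)
    if sxx == 0 or syy == 0:
        raise ValueError("a score set has zero variance; r is undefined")
    return sxy / math.sqrt(sxx * syy)

# Hypothetical: one rater scores the same five compositions twice, about two weeks apart
occasion_1 = [70, 75, 80, 85, 90]
occasion_2 = [72, 74, 83, 84, 91]
r = pearson_r(occasion_1, occasion_2)
print(f"intra-rater correlation r = {r:.3f}")
```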

8 “Consistency” and “Consistent”, Oxford Dictionary, 4th Ed. (UK: Oxford University Press, 2008), 91.

In this study, intra-rater reliability is used to determine the English teachers’ self-consistency in scoring essay tests. The Cronbach alpha coefficient is the most appropriate formula for establishing this reliability. The average result has to be above 0.7 for the teachers to be considered internally consistent and reliable. In addition, a reliability level is reported for each rater to give detailed information on that rater’s result.
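For reference, the standard Cronbach’s alpha formula can be written out for the two-occasion case used here, treating the pre- and post-scores as k = 2 “items” across the scored essays. The notation is introduced for illustration and is not taken from this thesis: s_1² and s_2² are the sample variances of the pre- and post-scores, s_12 is their covariance, and s_T² is the variance of each essay’s summed (pre + post) score.

```latex
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k} s_i^{2}}{s_T^{2}}\right)

% Two scoring occasions (k = 2), with s_T^2 = s_1^2 + s_2^2 + 2 s_{12}:
\alpha = 2\left(1 - \frac{s_1^{2} + s_2^{2}}{s_1^{2} + s_2^{2} + 2 s_{12}}\right)
       = \frac{4\, s_{12}}{s_1^{2} + s_2^{2} + 2\, s_{12}}

% Decision rule stated in this study:
\alpha > 0.7 \;\Rightarrow\; \text{the rater is considered internally consistent}
```

The second form shows that alpha rises with the covariance between a rater’s two scorings and becomes negative when that covariance is negative.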

3. Intra-rater Reliability Consistency

In this research, intra-rater reliability consistency means the English teachers’ self-consistency as raters of the essay test. The scores they themselves produced in the pre- and post-scoring are examined to decide whether they are consistent. Since this study investigates the consistency of intra-rater reliability, the calculation uses the intraclass correlation reliability estimate in SPSS 23 to analyze the scores produced by the raters.
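A sketch of one intraclass correlation model may help here. The Python code below assumes a two-way model with the consistency definition (an assumption; other ICC models and the absolute-agreement definition give different values), computes the single- and average-measures ICC from ANOVA mean squares, and checks that the average-measures value equals Cronbach’s alpha, a known identity for this model. The function names and the score table are hypothetical, and the sketch does not reproduce SPSS output.

```python
from statistics import variance

def icc_consistency(rows):
    """Two-way consistency ICC from a table of n essays (rows) x k scorings (columns).

    Returns (single_measures, average_measures).
    """
    n, k = len(rows), len(rows[0])
    if n < 2 or k < 2:
        raise ValueError("need at least two essays and two scorings")
    grand = sum(sum(r) for r in rows) / (n * k)
    row_means = [sum(r) / k for r in rows]
    col_means = [sum(r[j] for r in rows) / n for j in range(k)]
    ss_total = sum((x - grand) ** 2 for r in rows for x in r)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between essays
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between scoring occasions
    ss_error = ss_total - ss_rows - ss_cols                 # residual
    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    if ms_rows == 0:
        raise ValueError("no variation between essays; ICC is undefined")
    single = (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)
    average = (ms_rows - ms_error) / ms_rows
    return single, average

def cronbach_alpha(rows):
    """Cronbach's alpha treating each column (scoring occasion) as an item."""
    k = len(rows[0])
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    total_var = variance([sum(r) for r in rows])
    if total_var == 0:
        raise ValueError("summed scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical [pre, post] scores given by one rater to five essays
scores = [[78, 76], [82, 80], [70, 69], [88, 85], [75, 74]]
single, average = icc_consistency(scores)
alpha = cronbach_alpha(scores)
print(f"ICC single = {single:.3f}, ICC average = {average:.3f}, alpha = {alpha:.3f}")
```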

4. Scoring Essay Test

The word scoring itself is defined as gaining marks in a test or exam.10 An essay test is one or more essay questions administered to a group of students under standard conditions for the primary purpose of collecting evaluation data.11 In this research, scoring an essay test means grading students’ essays as their daily writing evaluation. The kind of essay test used in this study is an exposition essay about a current issue. This essay was chosen as the English …

10 “Score”, Oxford Dictionary, 4th Ed. ….. 393.

CHAPTER II

REVIEW OF RELATED LITERATURE

This chapter presents theories from the literature related to the discussion of the study. It also reviews previous studies to show the differences between this research and earlier research by other researchers.

A. Review of Previous Study

Before conducting this study, the researcher read several previous studies on related topics. The first is the thesis by Ita Faradillah entitled An Analysis of Essay Test on English Final Test for Grade Eleven Students of SMAN 1 Lamongan.1 That study investigated the content validity, difficulty index, and item discrimination of an essay test administered in two classes, XIA5 and XIA6. The result showed that the essay test had good content validity, as shown by 80% agreement. This means that most test items represented the material taught in grade eleven, although some items fell outside it. The difficulty index and item discrimination, however, produced different results in the two classes: they were acceptable in XIA5 but rejected in XIA6, where the results were around 0.1-1.0 for the difficulty index and 0.00-0.19 for the discrimination index, meaning that the items were either too easy or too difficult. Therefore, the essay test should be revised for XIA6 because it could not discriminate students’ achievement properly.

However, that study did not measure the level of the raters’ consistency in scoring the essay test. A good test must meet the requirements of both validity and reliability, yet that study investigated only validity, not reliability. Therefore, the present study examines reliability, viewed from the raters’ consistency, to complement that previous study.

The second study, Classroom Writing Teacher’s Intra- and Inter-rater Reliability: Does It Matter by Viphavee Vongpumivitch2 from National Tsing Hua University, investigated the reliability of each subscale of an analytic scale for scoring essay tests and the teachers’ consistency in scoring essays with that scale. The results showed that the teachers had very low intra- and inter-rater reliability; that each subscale of the analytic rating scale had low correlation, especially content and organization; and that each teacher understood the scale’s criteria differently, based on their experience, personality, and personal agenda. That study thoroughly measured each rater’s inter- and intra-rater reliability in using rubrics (scale criteria) for assessing writing.

The next study is Rater Discrepancy in the Spanish University Entrance Examination by Marian Amengual Pirazzo3 from the University of the Balearic Islands. It reported no significant differences between the holistic pre- and post-scores, but important differences in the raters’ behavior with respect to scoring consistency. In short, intra-rater reliability was quite high, with some exceptions related to, for example, the raters’ condition while scoring.

The last two studies examined scorers’ intra-rater reliability in scoring essay tests, covering raters who used both holistic and analytic assessment. As this is, to the researcher’s knowledge, the first study of intra-rater reliability in Indonesia, especially in the English Education Department of UIN Sunan Ampel Surabaya, it measures intra-rater reliability in general. What makes this research distinctive is its subject: the English teachers of Al-Amin Islamic Boarding Senior High School Mojokerto, where English learning focuses on students’ language skills, especially writing.

Another previous study is Reliability and Validity of Rubrics for Assessment through Writing by Ali Reza Rezaei from California State University and Michael Lovorn from the University of Alabama, USA.4 That study investigated the reliability and validity of rubrics in assessing students’ responses to a writing prompt. The results showed that rubrics may not improve the reliability and validity of assessment if raters are not well trained in how to use and implement them. This result contradicted the authors’ hypothesis that a rubric would improve raters’ reliability and validity because it is more analytic: even with a rubric, the raters were more influenced by mechanical characteristics than by content.

3 Marian Amengual Pirazzo. Rater Discrepancy in the Spanish University Entrance Examination. Journal of English Studies University of Balearic Island Vol.4 page 23-26, 2003-2004.

Although the present study also uses a rubric for scoring the essay test, its focus is not the rubric’s impact on the raters’ assessment. This research focuses only on the raters’ intra-rater reliability consistency in scoring the essay test across all the rubric’s categories, such as Content, Organization, Grammar, Vocabulary, and Mechanics.

B. Theoretical Background

1. Understanding of Consistency

Based on several dictionaries, consistency is the ability to remain the same in behavior, attitude, or qualities; that is, consistency indicates how stable someone is in doing something. In this case, analyzing the consistency of English language teachers in scoring essay tests means measuring the quality of human raters, namely English language teachers, in rating their students’ essay tests.

interpreted as the percent of systematic, or consistent, or reliable variance in the scores on a test.5

According to James Dean Brown, a reliability coefficient differs from a correlation coefficient in that it can go only as low as 0.00, because a test cannot logically have less than zero reliability.6 Therefore, if a negative reliability estimate is obtained, he suggests first checking the calculation for errors. If the calculation is correct, the negative result should be rounded to 0.00 and the test reported as having zero reliability, that is, as totally unreliable or inconsistent.
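Brown’s reporting rule can be expressed as a small helper. The Python sketch below is illustrative only: the function name `report_reliability` is hypothetical, and it assumes the coefficient has already been computed (for example, as Cronbach’s alpha). It flags a negative value for re-checking and reports it as 0.00; non-negative values are rounded to two decimals.

```python
def report_reliability(coefficient):
    """Apply the reporting rule for reliability coefficients described above.

    Returns (reported_value, recheck_calculation). A negative estimate is
    flagged so the calculation can be re-checked; if it is confirmed, it is
    reported as 0.00, i.e., zero reliability (totally unreliable or inconsistent).
    """
    if coefficient < 0:
        return 0.0, True
    return round(coefficient, 2), False

for estimate in (0.942, -0.13):
    reported, recheck = report_reliability(estimate)
    print(f"estimate {estimate:+.3f} -> reported {reported:.2f}, re-check: {recheck}")
```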

2. Understanding of Intra-rater Reliability

Reliability is one of the criteria of a good test. To be reliable, a test must be consistent in its measurement; in other words, a test score has to be free of measurement error.7 For example, when a teacher gives the same test to the same students on two different occasions, the test should produce the same results. If the results differ, many factors may be responsible: the students, the examiner or rater, the conditions under which the test takes place (test administration), or the test itself (the length of the questions, the paper used, etc.).

5 James Dean Brown, Testing in Language Programs….. 175.

6 Ibid., 175.

Student-related reliability concerns factors that come from the test takers, that is, the students. These include temporary illness, a “bad day”, anxiety, and other internal physical and psychological factors.8 Test administration reliability, by contrast, concerns the conditions during the testing process, such as street noise that prevents students sitting by the window from hearing the tape recorder clearly in a listening test, photocopying problems, differences in the amount of light in different parts of the room, temperature problems, or the condition of desks and chairs. Another factor that can affect reliability comes from the test itself, for example when the length of the test is not balanced with the time allowed.

The last set of factors that may influence reliability comes from the examiner or rater of the test. There are two kinds of rater reliability. The first is inter-rater reliability, which applies when two or more scorers examine the same test; if they produce different scores, the test has low reliability or is unreliable. The second is intra-rater reliability, which involves not two or more scorers but a single scorer examining the test individually. This type carries a greater possibility of subjectivity because of unclear scoring criteria, fatigue, bias, simple carelessness, or sympathy toward students.


Rater reliability, especially intra-rater reliability, is a form of repeated-measurement reliability as conceptualized in quantitative research. It concerns the ability to measure the same thing at different times, which is called the test-retest method. Using this method, this study investigates how strong the relationship is between the scores at the two time points, which in this case indicates consistency. The intra-rater reliability coefficient can be computed from either the average or the sum of the two sets of scores in the decision-making process. Cronbach’s alpha is the most appropriate form for calculating this coefficient; it can be viewed as an extension of the split-half reliability method. The result is expressed as a decimal and shows the reliability level of each teacher. In addition, it helps to give the final answer as to whether the intra-rater reliability of the English teachers at Al-Amin Islamic Boarding School Mojokerto is consistent in scoring essay tests.
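The per-teacher computation described above might look like the Python sketch below. It assumes that each teacher’s pre- and post-scores for the same essays are treated as two “items” in Cronbach’s alpha and that each teacher is judged against the 0.7 criterion used in this study. The rater labels, scores, and function name are hypothetical; the thesis itself performed this step in SPSS 23.

```python
from statistics import variance

THRESHOLD = 0.7  # minimum alpha for a rater to count as internally consistent

def cronbach_alpha_two_occasions(pre, post):
    """Cronbach's alpha for one rater, with the two scoring occasions as k = 2 items."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need equal-length pre/post lists with at least two essays")
    totals = [a + b for a, b in zip(pre, post)]  # each essay's summed score
    total_var = variance(totals)
    if total_var == 0:
        raise ValueError("summed scores have zero variance; alpha is undefined")
    k = 2
    return k / (k - 1) * (1 - (variance(pre) + variance(post)) / total_var)

# Hypothetical raters: (pre-scores, post-scores) for the same five essays
raters = {
    "Rater A": ([78, 82, 70, 88, 75], [76, 80, 69, 85, 74]),
    "Rater B": ([65, 72, 80, 60, 77], [70, 70, 74, 68, 70]),
}

alphas = {name: cronbach_alpha_two_occasions(pre, post) for name, (pre, post) in raters.items()}
consistent = {name: a > THRESHOLD for name, a in alphas.items()}
for name in raters:
    print(f"{name}: alpha = {alphas[name]:.3f}, consistent = {consistent[name]}")
```

Keeping the per-rater alphas in a dictionary makes it straightforward to report each teacher’s reliability level alongside the overall decision.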

An important question in measuring intra-rater reliability is how much time should pass before the post-scoring. This is difficult to answer, as studies on this topic have used different time intervals. For example, the study entitled Classroom Writing Teacher’s Intra- and Inter-rater Reliability: Does It Matter by Viphavee Vongpumivitch9 from National Tsing Hua University used a one-week interval between the pre- and post-training stages, whereas the study entitled Rater Discrepancy in the Spanish University Entrance Examination by Marian Amengual Pirazzo10 from the University of the Balearic Islands used a three-month interval between pre- and post-scoring. If the time interval is too short, raters may remember how they scored the first time and simply give the same score again. This is called the carryover effect, and it can lead to overestimating the reliability of the test.11 Conversely, if the interval is too long, the raters’ opinions may have genuinely changed. One to two weeks is often recommended as the optimal interval, though some risk of carryover remains.12 To reduce the risk of carryover, this study used a two-month interval, which is neither as short as one week nor as long as three months.

Unreliability, or inconsistency, is clearly a problem. An inconsistent rater makes a test unreliable, which affects the scores produced. The scores may then be shaped by factors that yield an unreal grade, that is, one that does not represent the students’ real condition. As a result, students will not receive appropriate feedback and marks based on their true ability.

This research focuses only on the intra-rater reliability of the test rater. It is the most suitable type of reliability to study, given the situation of English teachers in Indonesia, who teach their classes individually. Therefore, the researcher investigates the consistency of English teachers in scoring the essay test, a subjective test, in grade eleven of Al-Amin Islamic Boarding Senior High School Mojokerto.

10 Marian Amengual Pirazzo. Rater Discrepancy in the Spanish University Entrance Examination. Journal of English Studies University of Balearic Island Vol.4 page 23-26, 2003-2004.

11 Daniel Muijs. Doing Quantitative Research…. 72.

3. Understanding of Essay Test

According to Coffman, an essay test is one or more questions administered to a group of students under standard conditions for the primary purpose of collecting evaluation data.13 Essay items are useful when teachers want to learn how students arrive at an answer, since, unlike objective tests, they do not ask students to choose among given responses but to share their ideas in their own words. In this type of test, students decide how to approach the problem, how to set it up, what factual information or opinions to use, and how specifically to express their answer.

According to Stalnaker’s definition, an essay test should meet the following criteria:14

- Requires examinees to compose rather than select their response.
- Elicits student responses that must consist of more than one sentence.
- Allows different or original responses or patterns of responses.
- Requires subjective judgment by a competent specialist to judge the accuracy and quality of responses.

13 W. E. Coffman, Essay Examination.….. 271.

The essay test is the most appropriate way to measure students’ cognitive skills because it explores their critical thinking and conscious mental processes. Age influences human cognition, which develops rapidly throughout the first sixteen years of life and less rapidly thereafter.15 Therefore, this research concerns grade eleven, whose students, the participants who took the scored essay test, are on average sixteen to seventeen years old. In addition, the essay material is based on grade eleven content, so the researcher is confident that all students have learned the material well and will do their best on the test.

Nowadays, English teachers have increasingly turned to the essay test. They have several reasons for choosing it over the multiple-choice (MC) test, even though MC tests are easier to assess because they are a kind of objective test. The reasons are:16

- Assess students’ higher-order or critical thinking skills: this test can measure complex learning outcomes that cannot be assessed effectively by other assessment procedures.
- Evaluate students’ thinking and reasoning: this test can examine thought processes, such as how students select, organize, and evaluate facts and ideas.
- Provide an authentic experience: this test can assess students’ ability to construct solutions and make decisions.
- Require students to use their own writing skills:17 students select their own words, sentences, and paragraphs and must use correct grammar and spelling.

15 H. Douglas Brown, Principles of Language Learning and Teaching Fourth Edition (San Francisco State University: Longman Inc., 2000), 61.

Besides its strengths, the essay test has many weaknesses, such as a lack of validity and reliability, unpredictable results, difficulty of assessment, and the longer time needed for marking. The main problem of this test lies in examiner reliability: testers have many difficulties in deciding the score of each essay. Even when there is a rubric containing scale criteria, subjectivity remains in the scoring process. In addition, English teachers in Indonesia are rarely aware of their objectivity in scoring essay tests as subjective tests. They score the test individually without checking whether their scores would remain stable if they scored it on a different occasion. Therefore, there is a high possibility that their assessment of each essay will change depending on the raters’ internal or external situation when they score it (low intra-rater reliability).

There are two types of essay test: the extended-response question and the restricted-response question.18 They are distinguished by how much freedom students have in choosing the content and the form. The extended-response question allows students to decide the content and the format freely, while the restricted-response question limits students’ choice of both. Most writers agree that the restricted type is the most appropriate form when teachers wish to test content. This study uses a restricted-response question in the form of an exposition essay, because this essay test is one of the daily examinations held to measure students’ achievement on specific material in the curriculum. Therefore, the teachers can examine each student’s writing ability clearly.

17 William E. Chasin. Idea Paper No.17: Improving….1.

4. Understanding of Scoring Essay Test

Because the essay test is a subjective test that involves complex judgment, its scoring has received considerable attention, especially regarding human raters. Although many applications offer automated essay scoring, humans still play a large part in this assessment because they can understand both the content and the quality of the writing. Some strengths of scoring by human raters are that they can (a) cognitively process the information given in a text, (b) connect it with their prior knowledge, (c) make a judgment on the quality of the text based on their understanding of the content, and (d) recognize and appreciate students’ creativity and style.19

Besides these strengths, human scoring has limitations. Human raters must be well qualified and trained in how to use the scoring rubric, so they must be monitored continuously.20 In addition, they can make mistakes due to cognitive limitations that are difficult to quantify and that cause systematic bias in the scores.21 This bias can lower the validity and reliability of the essay test. Therefore, it is very important to check for it promptly. Since a previous study examined the content validity, difficulty index, and item discrimination of the essay test, the researcher examines another aspect of it. This study measures the reliability of the essay test as observed from the individual grader of the test, that is, intra-rater reliability.

There are two tools that testers can choose from in scoring an essay test: holistic (global) and analytic (point-score) assessment. The main difference between them lies in how the evaluation is made and how the expected performance is described. The analytic tool allows separate evaluation of each factor (e.g., persuasive argument and grammar in writing) and provides a description of what is expected at each score level. In short, analytic assessment is characterized by specific scale criteria that determine how much of each maximum subtotal the rater judges the student’s answer to have earned.22

I. I. Bejar, (2011). A validity-based approach to quality control and assurance of automated scoring. Assessment in Education: Principles, Policy & Practice, 18(3), 319


criteria set for evaluation of different factors. In addition, it supports broader judgments about the quality of the process or the product. In short, this tool involves an overall evaluation: the rater judges how successfully the student has covered everything expected in the answer and assigns the paper a grade.
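As a minimal sketch of analytic (point-score) scoring, the snippet below adds the rubric’s category subtotals into a total score. The five category names follow the rubric columns used in the findings chapter, and the example values are Rater 1’s pre-scores for the first essay in the table of raters’ pre- and post-scores. The function name is hypothetical, and because the maximum points per category are not stated in this section, the sketch does not check them.

```python
# Analytic (point-score) scoring: each rubric category is judged separately,
# and the total is the sum of the category subtotals.
CATEGORIES = ("Content", "Organization", "Grammar", "Vocabulary", "Mechanic")


def analytic_total(subtotals: dict[str, float]) -> float:
    """Return the total score as the sum of the five category subtotals."""
    missing = [c for c in CATEGORIES if c not in subtotals]
    if missing:
        raise ValueError(f"missing categories: {missing}")
    return sum(subtotals[c] for c in CATEGORIES)


# Rater 1, first essay, pre-scoring (from the findings chapter).
rater1_essay1_pre = {
    "Content": 14,
    "Organization": 14,
    "Grammar": 40,
    "Vocabulary": 13,
    "Mechanic": 4,
}
print(analytic_total(rater1_essay1_pre))  # 85
```

The computed total matches the 85 reported for that essay, which is what the analytic model implies: the total carries no judgment of its own beyond the category scores.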

CHAPTER III

RESEARCH METHOD

This chapter describes the procedure for conducting the research. It covers the research design; the population and sample; the setting, including the time and place of the study; the data and sources of data; the data collection technique and research instrument; and the data analysis technique.

A. Research Design

The research design is the overall plan or structure used to answer the research question.1 The design guides the researcher in collecting and analyzing the data. This research used a quantitative design because numerical data were needed to measure the English teachers’ intra-rater reliability and to analyze it mathematically.

“Quantitative research is explaining phenomena by collecting numerical data that are analyzed using mathematically based methods (in particular statistics).”2

Statistics is the mathematically based method used to analyze data in this design. The statistical procedures used in this study were descriptive statistics, which

1 Phyllis Tharenou, Ross Donohue, and Brian Cooper, Management Research Methods (New York: Cambridge University Press, 2007), 16.


helps the researcher to organize, summarize, and describe observations.3 That is, after collecting the English teachers’ pre- and post-scoring of the essay test as numerical data, the researcher analyzed the data statistically and then interpreted and summarized them descriptively. In addition, inferential statistics were used in this study to generalize findings to the entire population from which the sample was drawn.4 With these statistics, this research aimed to show whether the descriptive result was a real result or merely incidental.

B. Subject of the Research

1. Population

A population is defined as all members of any well-defined class of people, events, or objects about which a generalization is made.5 The population of this research was all English teachers at Al-Amin Islamic Boarding School Mojokerto. There are eight teachers with the same qualifications as English teachers at Al-Amin Islamic Boarding School: each has a college degree or a national language certificate.

3 Donald Ary, Lucy Cheser Jacobs, and Christine K. Sorensen, Introduction to Research in Education, Eighth Edition (USA: Wadsworth Cengage Learning, 2010), 101.

4 Ibid., 148.

2. Sample

The sample is the smaller group or subset of the total population, chosen so that the knowledge gained is representative of the population under study.6 This study used purposive sampling, also called judgment sampling, a method in which the sample elements are judged to be typical or representative of the population.7 The sample was six English teachers, because the other two teachers had less experience in scoring essay tests. This study therefore asked only these six teachers to take part, since they share the same understanding of essay-test scoring at Al-Amin Islamic Boarding School. As the newest study of intra-rater reliability consistency, this study aimed to keep the data collection as close as possible to the way the English teachers usually score essay tests.

C. Setting of the Research

1. Place

The place of the study is the location where the researcher conducts the research activity. This study took place in grade eleven of the Islamic Boarding Senior High School of Al-Amin Mojokerto, located at RA. Basuni Street no. 18, Sooko, Mojokerto, East Java 61361.

6 Louis Cohen, Lawrence Manion, and Keith Morrison, Research Methods in Education, Sixth Edition (London: Routledge, 2007), 100.

2. Time

The time of the research is the period from when the researcher starts the study until it is finished, especially the data collection. This research ran from February 20th, 2016 to April 23rd, 2016. All teachers took part in two scheduled data collection sessions:

1) One on February 22nd, 2016 (pre-scoring)

2) One on April 18th, 2016 (post-scoring)

D. Data and Source of Data

The data needed to answer the research question were the students’ essay test scores in pre- and post-scoring. This research focused only on the essay test given in grade eleven of Al-Amin Islamic Boarding Senior High School Mojokerto, because the essay material is taught at this level. The essay question guide was written jointly by the grade-eleven English teacher and the researcher, so it followed the teachers’ usual way of organizing essay tests and resembled their daily tests. See Appendix 1.

The source of data was the English teachers’ marks on their students’ essay tests. There were six English teachers; three

E. Technique of Data Collection and Research Instrument

Collecting data means identifying and selecting individuals for a study, obtaining their permission to study them, and gathering information by asking people questions or observing their behaviors, recorded either as numbers (test scores, frequency of behaviors) or as words (responses, opinions, quotes).8 This study uses a documentation technique, which usually involves quantitative data in the form of archival records.9 Here, the records were the English teachers’ pre- and post-scoring of the essay test. The teachers had already used some specific score targets for students, but these were not detailed. Therefore, the teachers were given a rubric to help them assess more specifically. The rubric was adapted from the article by Viphavee Vongpumivitch entitled Classroom Writing Teacher’s Intra- and Inter-rater Reliability: Does It Matter10 from National Tsing Hua University, as an analytical rating grid adjusted to the teachers’ previous scoring scale. See Appendix 2.

These data collection sessions are described below.

a. PRE: From the 40 essays written in grade eleven, 20 were chosen randomly. The three male teachers were asked to score the same set of 10 essays, and the three female teachers were asked to score another set of 10 essays.

8 John W. Creswell, Educational Research….10.

9 Phyllis Tharenou, Ross Donohue, and Brian Cooper, Management Research Methods….. 124.

b. POST: Two months later, all teachers as raters were given another 10 essays. These 10 essays consisted of 5 essays they had scored in pre-scoring and 5 different essays. As in pre-scoring, they were asked to score these essays. Some teachers did not remember having read and scored the same essays before; others remembered having read them but had forgotten the specific scores they gave.

A research instrument is a tool for measuring, observing, or documenting the research data.11 The instrument was the students’ answers to the essay test. For the pre-score, the English teachers graded the students’ essays a day after the test. For the post-score, two months later, the English teachers scored copies of the same essays, as in the pre-score.

F. Data Analysis Technique

According to Creswell, analyzing data involves drawing conclusions about it, representing it in tables, figures, and pictures to summarize it, and explaining the conclusions in words to answer the research question.12 As a quantitative approach was used, the researcher analyzed the data using descriptive statistics. The grade XI students took the essay test. After the students wrote the test by hand, the English teachers gave the essays to the researcher to be copied. Then, the researcher gave the first copy to the English

11 John W. Creswell, Educational Research …. 14.

teachers to give the first score (pre-score). After scoring, the teachers returned the first essay copies to the researcher, who recapitulated the scores.

The second score (post-score) was given two months later. As with the first score, the researcher gave the second copies to the teachers, and they graded them for the post-score. Then the researcher made a second recapitulation to be analyzed and compared with the pre-score.

After this step, the data were analyzed with a simple rater-reliability statistic, Cronbach’s alpha coefficient (α), an alternative procedure to the split-half method of estimating reliability. This analysis served as the descriptive statistic summarizing the intra-rater reliability consistency of English teachers at Al-Amin Islamic Boarding School Mojokerto in scoring essay tests.
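A minimal sketch of the Cronbach’s alpha calculation, assuming the two scoring occasions (PRE and POST) are treated as the “items” and the essays as the cases, with sample variances (n − 1 denominator). The function name is hypothetical; it is not SPSS’s code. Using Rater 1’s total scores from the findings chapter, it reproduces the 0.832 reported for that rater in Table 4.2.

```python
from statistics import variance


def cronbach_alpha(*items: list[float]) -> float:
    """Cronbach's alpha for k items (here: k scoring occasions) over the same cases.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of case totals)
    Sample variances (n - 1 denominator) are used throughout.
    """
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("all items must score the same cases")
    totals = [sum(scores) for scores in zip(*items)]
    item_var_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Rater 1 total scores for the five same essays.
pre = [85, 66, 90, 82, 72]
post = [68, 65, 88, 79, 73]
print(round(cronbach_alpha(pre, post), 3))  # 0.832
```

With only two occasions, the formula reduces to comparing the spread of the essay totals with the spread of each occasion on its own, so alpha is high only when the two scorings rank the essays similarly.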

The data were analyzed with the reliability analysis in SPSS 23 for each rater in each category of assessment. The steps were:

1. Opening the SPSS 23 software;

2. Naming the two variables “PRE” and “POST”;

3. Setting the value in the “Decimals” column to 1 and choosing “Scale” in the “Measure” column;

4. Entering the data in the “Data View”;

5. Clicking “Analyze”, choosing “Scale”, then “Reliability Analysis”;

7. Choosing “Alpha” as the model, as this research used the Cronbach alpha coefficient for the reliability analysis;

8. Clicking “Statistics” and checking “Intraclass correlation coefficient”;

9. Choosing “Two-Way Mixed” as the model, as this study involved a population of raters;

10. Reading the “Average Measures” value in the output, as this research needed the coefficient for the mean of the two scorings;

11. Choosing “Consistency” as the type, as this study wanted to use the resulting values in further analyses;

12.Clicking “Continue” and “OK”.
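The ICC settings chosen above can be cross-checked by hand. The following is a minimal sketch that assumes a complete essays × occasions table. For the “Consistency” type, the average-measures ICC is computed from a two-way ANOVA as (MS_rows − MS_error) / MS_rows. That expression is algebraically equal to Cronbach’s alpha, which is why the SPSS intraclass correlation output and the alpha coefficient agree. The function name is hypothetical.

```python
def icc_consistency_average(data: list[list[float]]) -> float:
    """ICC for consistency, average measures: (MS_rows - MS_error) / MS_rows.

    `data` has one row per essay and one column per scoring occasion (PRE, POST).
    """
    n = len(data)        # essays (rows)
    k = len(data[0])     # scoring occasions (columns)
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(row[j] for row in data) / n for j in range(k)]

    # Two-way ANOVA sums of squares without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / ms_rows


# Rater 1 total scores, one row per essay: [PRE, POST]
rater1 = [[85, 68], [66, 65], [90, 88], [82, 79], [72, 73]]
print(round(icc_consistency_average(rater1), 3))  # 0.832
```

The column (occasion) effect is removed before the error term is formed, so a uniform drop from PRE to POST does not lower this coefficient. That behavior is what the “Consistency” type means, as opposed to “Absolute Agreement”.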

The result of this analysis is a decimal value (e.g., 0.333). The coefficient has to be above 0.7 before it can be concluded that the measure is internally consistent.13 In addition, several levels of reliability can be interpreted from the coefficient. These levels were used to identify the consistency of each rater in each category. The interpretation is described below.14

3.1 Table of Reliability Interpretation

13 Daniel Muijs, Doing Quantitative Research…. 73.

Therefore, the researcher included 0.00 in the reliability interpretation table above in order to flag results with a negative coefficient.

The table presents two columns: the range of the Cronbach alpha coefficient (α) and the interpretation of that range. For instance, the range 0.800 < α ≤ 1.000 means that a coefficient above 0.800 and up to 1.000 is interpreted as Very High reliability, and so on.
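Only the Very High band (0.800 < α ≤ 1.000) and the 0.00 floor are stated in this section. The sketch below therefore assumes equal 0.200-wide bands for the other levels and treats a coefficient of zero or below as Unreliable. These cut-offs are an assumption, chosen because they reproduce every label in Table 4.3 from the coefficients in Table 4.2. The function name is hypothetical.

```python
# ASSUMPTION: only "Very High" (0.800 < alpha <= 1.000) and the 0.00 floor are
# stated in the text; the other lower bounds are assumed equal-width bands.
BANDS = [
    (0.800, "Very High"),
    (0.600, "High"),
    (0.400, "Enough"),
    (0.200, "Low"),
    (0.000, "Very Low"),
]


def interpret_alpha(alpha: float) -> str:
    """Map a Cronbach alpha coefficient to a reliability label."""
    if alpha > 1.0:
        raise ValueError("alpha cannot exceed 1.0")
    for lower, label in BANDS:
        if alpha > lower:  # each band excludes its lower bound
            return label
    return "Unreliable"  # zero or negative coefficient


# Coefficients taken from Table 4.2.
for value in (0.925, 0.800, 0.571, 0.375, 0.158, -1.333):
    print(value, interpret_alpha(value))
```

Because each band excludes its lower bound, Rater 4’s Vocabulary coefficient of exactly 0.800 falls in High rather than Very High, matching Table 4.3.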

After the intra-rater reliability consistency was obtained as a Cronbach alpha coefficient, inferential statistics were used in the next analysis step to test significance. The level of significance is the predetermined level at which a null hypothesis is rejected.15 That is, the finding was tested to see whether it was merely incidental or a true result, as this research was conducted only once. Since both variables came from the same subjects, the t-test for dependent samples, called the paired t-test, was appropriate for this analysis.

The paired t-test was calculated using SPSS 23. As in the reliability analysis, the data were entered into two variables, PRE and POST, in the “Data View”, with “Decimals” set to 1 and “Scale” chosen in “Measure”. The test was run by clicking the “Analyze” menu, choosing “Compare Means”, and then selecting “Paired-Samples T Test”. In the dialog box that appears, both variables were selected and moved to the “Paired Variables” box, and then “OK” was clicked. SPSS then presents the output.

The steps in the inferential analysis using the paired t-test were:

1. Deciding the level of significance. The most commonly used levels of significance in the behavioral sciences are 0.05 and 0.01.16 If the result was not significant at either level, other levels such as 0.10, 0.20, or 0.50 would be tried.

2. Calculating the paired t-test in SPSS 23.

3. Comparing the t value with the t-table value, and the p-value “Sig. (2-tailed)” with the level of significance, to decide whether the null hypothesis was rejected.
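Steps 2 and 3 can be sketched outside SPSS as follows. This is a minimal illustration, not the study’s calculation. The paired t statistic is the mean of the PRE − POST differences divided by their standard error. The decision function applies step 3, using the absolute value of t because the test is two-tailed. Rater 1’s totals are used only to show the arithmetic; the study itself tested the averaged data. Both function names are hypothetical. For raw data, `scipy.stats.ttest_rel` would also return a two-sided p-value.

```python
import math
from statistics import mean, stdev


def paired_t(pre: list[float], post: list[float]) -> tuple[float, int]:
    """Paired (dependent-samples) t statistic and degrees of freedom.

    t = mean(d) / (sd(d) / sqrt(n)), where d = PRE - POST for each essay.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length lists with at least two pairs")
    diffs = [a - b for a, b in zip(pre, post)]
    n = len(diffs)
    return mean(diffs) / (stdev(diffs) / math.sqrt(n)), n - 1


def decide(t: float, t_table: float, p_value: float, alpha: float = 0.05) -> str:
    """Step 3: reject H0 only if |t| exceeds the t-table value and p < alpha."""
    if abs(t) > t_table and p_value < alpha:
        return "reject H0"
    return "accept H0"


# Illustration only: Rater 1's total scores, not the averaged data tested in the study.
rater1_pre = [85, 66, 90, 82, 72]
rater1_post = [68, 65, 88, 79, 73]
t, df = paired_t(rater1_pre, rater1_post)
print(f"t = {t:.3f}, df = {df}")

# Decision rule applied to the values reported for the averaged data.
print(decide(3.541, 2.776, 0.02))  # reject H0
```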

G. Reliability and Validity

This research used students’ essays as the instrument examined in pre- and post-scoring. The research instrument must meet the requirements of reliability and validity. The reliability of a measuring instrument is the degree of consistency with which it measures whatever it is measuring.17 That is, the instrument has to produce the same result across measurements. Because the instrument took the form of essays, its reliability could not be measured mathematically. Nonetheless, this study tried to make the instrument reliable by keeping the data authentic and avoiding possible subjective influence. The following steps were taken to keep the data reliable:

- Not changing any data (students’ work) scored by the English teachers in pre- and post-scoring (the same essays).

- Photocopying the essay answers twice, one copy for pre-scoring and another for post-scoring two months after the first scoring, so that the examiners scored the students’ original handwriting.

- Replacing the students’ names on each answer sheet with their numbers on the attendance list, so that the raters did not know whose writing it was.

Besides reliability, the validity of the research instrument is also very important. Validity is the extent to which an instrument measures what it claims to measure.18 As a daily essay examination, the essay test’s question guide was made by the teacher based on the grade-eleven even-semester material in the K-13 curriculum for basic competences 3.10 and 4.14, about exposition texts on current issues. Therefore, the content of the students’ essays was 100% valid as

17 Ibid., 236.

CHAPTER IV

FINDINGS AND DISCUSSIONS

This chapter presents the research findings and discussion. It provides the analysis and interpretation of the data collected to answer the research question about the consistency of English teachers at Al-Amin Islamic Boarding Senior High School Mojokerto in scoring essay tests.

As explained in Chapter III, the grade-eleven teachers held a weekly writing examination, an exposition essay test, to measure students’ achievement in writing that kind of text. The students wrote the essays by hand to keep their writing original. Although handwriting might increase subjectivity, the researcher controlled for it by removing the students’ names before copying the essays and giving them to the examiners or raters, that is, the teachers. Each of the six teachers, as raters, was asked to grade 10 papers chosen randomly by the researcher from 40 essays in pre- and post-scoring. Five of the 10 papers in post-scoring were the same essays they had already rated in pre-scoring. After the two-month interval, some teachers admitted that they did not remember having seen those papers before. Others said that although they remembered having seen the papers, they could not remember the grades they gave.

Alpha coefficient as the descriptive statistical analysis and the paired t-test as the inferential statistical analysis, to check the significance of the finding and to test the null hypothesis in SPSS. The study found varied results for each rater.

A. Findings

1. Descriptive Statistics

The raters were asked to grade the same five essays in pre- and post-scoring with a two-month interval, and some of them did not know that they were scoring the same essays. Others might have realized it, but they had completely forgotten the scores they gave to each essay. The table below shows the five essays in pre- and post-scoring, scored with the rubric used in the assessment.

4.1 Table of Raters’ Pre- and Post-Scores of the Five Same Essays

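| Rater | No. Essay | Content PRE | Content POST | Organization PRE | Organization POST | Grammar PRE | Grammar POST | Vocabulary PRE | Vocabulary POST | Mechanic PRE | Mechanic POST | Total PRE | Total POST |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Rater 1 | 1st | 14 | 10 | 14 | 10 | 40 | 35 | 13 | 10 | 4 | 3 | 85 | 68 |
| Rater 1 | 2nd | 11 | 11 | 10 | 10 | 32 | 30 | 10 | 11 | 3 | 3 | 66 | 65 |
| Rater 1 | 3rd | 14 | 14 | 14 | 14 | 45 | 44 | 14 | 12 | 3 | 4 | 90 | 88 |
| Rater 1 | 4th | 13 | 12 | 13 | 12 | 40 | 40 | 13 | 12 | 3 | 3 | 82 | 79 |
| Rater 1 | 5th | 13 | 12 | 12 | 11 | 32 | 35 | 11 | 12 | 4 | 3 | 72 | 73 |
| Rater 6 | 2nd | 13 | 11.5 | 12.5 | 11.5 | 41 | 38 | 12.5 | 10 | 4.3 | 3.8 | 83.3 | 74.8 |
| Rater 6 | 3rd | 13 | 11.5 | 12.5 | 11.5 | 41 | 36 | 12.5 | 10.5 | 4.3 | 4 | 83.3 | 73.5 |
| Rater 6 | 4th | 12.5 | 13 | 11.5 | 12.5 | 40 | 43 | 11 | 12.5 | 3.8 | 4.3 | 78.8 | 85.3 |
| Rater 6 | 5th | 13 | 11.5 | 12.5 | 11.5 | 41 | 36 | 12.5 | 10.5 | 4.3 | 4 | 83.3 | 73.5 |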

The table above shows the actual scores from the English teachers’ pre- and post-scoring. The score in each category follows the rubric given. “Rater” refers to a teacher who graded the essays. The teachers’ identities are not described in detail; what matters is that they met the same criteria: each had a degree or a language certificate. In other words, the teachers were regarded as having equal capability in English. The essay number is the essay’s identifier. Although the essays were numbered randomly in pre- and post-scoring, the five same essays were given a specific mark to help the researcher analyze them. This ensured that the five essays in post-scoring were the same as those in pre-scoring.

The table shows that each rater changed their scores in almost every category of the assessment. A post-score could be higher or lower than the pre-score, and only a few post-scores were the same as the pre-scores. These changes naturally affected the total score of each essay. The researcher was confident that the changes were not intentional, as some raters admitted that they had forgotten the scores they gave to the essays.

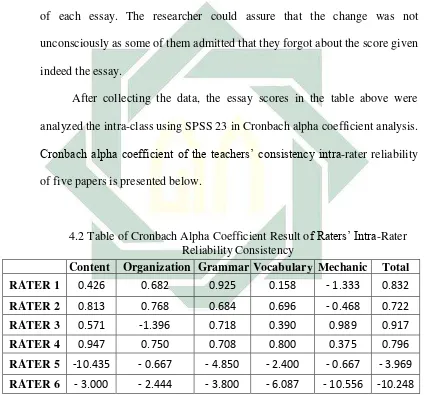

After the data were collected, the essay scores in the table above were analyzed for intraclass reliability with the Cronbach alpha coefficient in SPSS 23. The Cronbach alpha coefficients of the teachers’ intra-rater reliability consistency on the five papers are presented below.

4.2 Table of Cronbach Alpha Coefficient Result of Raters’ Intra-Rater Reliability Consistency

| Rater | Content | Organization | Grammar | Vocabulary | Mechanic | Total |
|---|---|---|---|---|---|---|
| Rater 1 | 0.426 | 0.682 | 0.925 | 0.158 | -1.333 | 0.832 |
| Rater 2 | 0.813 | 0.768 | 0.684 | 0.696 | -0.468 | 0.722 |
| Rater 3 | 0.571 | -1.396 | 0.718 | 0.390 | 0.989 | 0.917 |
| Rater 4 | 0.947 | 0.750 | 0.708 | 0.800 | 0.375 | 0.796 |
| Rater 5 | -10.435 | -0.667 | -4.850 | -2.400 | -0.667 | -3.969 |
| Rater 6 | -3.000 | -2.444 | -3.800 | -6.087 | -10.556 | -10.248 |

rater’s grammar scores for the five essays were entered and analyzed in SPSS, producing 0.684 as the Cronbach alpha coefficient. Because the scores varied, each rater obtained different results across the categories. Unfortunately, some data produced negative values, which are treated as 0.00 (zero reliability). Nevertheless, the researcher used these actual results to analyze whether the raters were consistent.
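Why a coefficient can turn negative is easiest to see in the two-occasion case. With k = 2, alpha simplifies to 4 · cov(PRE, POST) / var(PRE + POST). Alpha is therefore negative exactly when a rater’s post-scores move against their own pre-scores. The minimal sketch below shows this, using Rater 1’s Mechanic scores from the table of pre- and post-scores. It reproduces the −1.333 in Table 4.2. The function name is hypothetical.

```python
from statistics import mean, variance


def two_occasion_alpha(pre: list[float], post: list[float]) -> tuple[float, float]:
    """Return (alpha, covariance) for two scoring occasions of the same essays.

    For k = 2, alpha = 2 * (1 - (var_pre + var_post) / var_total) simplifies to
    4 * cov(PRE, POST) / var(PRE + POST), so alpha < 0 exactly when cov < 0.
    """
    n = len(pre)
    m_pre, m_post = mean(pre), mean(post)
    # Sample covariance (n - 1 denominator), matching the sample variances.
    cov = sum((a - m_pre) * (b - m_post) for a, b in zip(pre, post)) / (n - 1)
    totals = [a + b for a, b in zip(pre, post)]
    return 4 * cov / variance(totals), cov


# Rater 1, Mechanic category, for the five same essays.
mech_pre = [4, 3, 3, 3, 4]
mech_post = [3, 3, 4, 3, 3]
alpha, cov = two_occasion_alpha(mech_pre, mech_post)
print(f"cov = {cov:.3f}, alpha = {alpha:.3f}")
```

Alpha is not bounded below by zero. A small total variance, as with Mechanic scores that differ by only one point, can push a slightly negative covariance far below zero. This is consistent with the very large negative values for Raters 5 and 6.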

To determine the intra-rater reliability level of each teacher, the Cronbach alpha coefficients were interpreted according to the Reliability Interpretation table presented in the Data Analysis Technique subsection. The interpretation results are presented below.

4.3 Table of Raters’ Reliability Interpretation Result

| Rater | Content | Organization | Grammar | Vocabulary | Mechanic | Total |
|---|---|---|---|---|---|---|
| Rater 1 | Enough | High | Very High | Very Low | Unreliable | Very High |
| Rater 2 | Very High | High | High | High | Unreliable | High |
| Rater 3 | Enough | Unreliable | High | Low | Very High | Very High |
| Rater 4 | Very High | High | High | High | Low | High |
| Rater 5 | Unreliable | Unreliable | Unreliable | Unreliable | Unreliable | Unreliable |
| Rater 6 | Unreliable | Unreliable | Unreliable | Unreliable | Unreliable | Unreliable |

the score fell within the range 0.800 < α ≤ 1.000. Thus, the table above presents each result in words.

As the table above shows, the 1st rater reached a Very High reliability level in Grammar and a High level in Organization. Content was reliable at the Enough level, but Vocabulary was Very Low. Mechanic was the worst: its negative coefficient means it was unreliable. Fortunately, his total score was highly reliable, at the Very High level.

The 2nd rater differed from the 1st. Most categories, namely Organization, Grammar, and Vocabulary, reached the High level, which may have influenced the Total score. Content was the most reliable category: its coefficient of 0.813 falls in the Very High level. In contrast, Mechanic was unreliable, with a negative coefficient like that of the 1st rater.

The Mechanic and Total scores of the 3rd rater were almost perfect, both at the Very High reliability level. He reached the High level in Grammar, the Enough level in Content, and the Low level in Vocabulary. Unfortunately, like the two raters before him, he had a negative value in one category, which means unreliable; for this rater, it was Organization.

was the lowest reliability level, with a coefficient of 0.375, although it was still positive.

The most unreliable raters were the 5th and 6th. All of their categories had negative values, so they can be treated as scoring 0.000, that is, zero reliability. In short, they were regarded as inconsistent or unreliable.

Because the marks varied, the average of all grades was calculated so that the result could represent all raters in all categories. The table below shows the average pre- and post-scoring results of the five same essays. For example, the pre-scoring value for the 1st essay was the average of all pre-scoring results in all categories, and likewise for post-scoring.

4.4 Table of All Essays’ Average Results

| No. Essay | PRE | POST |
|---|---|---|
| 1st | 81.8 | 77.4 |
| 2nd | 75.8 | 72.9 |
| 3rd | 83 | 80.7 |
| 4th | 81.9 | 81.5 |

that the English teachers as raters had good reliability consistency. Although two raters were inconsistent in all categories, this did not affect the other results that make up the average.

4.5 Output of Intraclass Correlation in Cronbach Alpha Coefficient of SPSS 23

(SPSS output: Intraclass Correlation Coefficient table, with columns for the intraclass correlation, its 95% confidence interval, and the F test with true value 0.)

2. Inferential Statistics

After the descriptive results were obtained, their significance was checked with inferential statistics, using the paired t-test in SPSS 23. Only the average results were tested, not every individual result, because the averages cover all values of all raters in pre- and post-scoring. The result of the paired t-test in SPSS 23 is shown below.

4.6 Output of Paired T Test Result Of Average Result in SPSS 23


The first table, Paired Samples Statistics, summarizes pre- and post-scoring. The mean score was 80.360 in pre-scoring and 78.020 in post-scoring, a reduction of about 2.340. The standard deviation shows the variation in each variable: 2.887 in pre-scoring and 3.394 in post-scoring. N is the number of data points: five essays, each graded twice by the raters with a two-month interval.

The Paired Samples Correlations table shows a correlation of 0.902 between the two variables, with a significance of 0.036. This means that pre- and post-scoring were strongly related.
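The t value in the Paired Samples Test can be checked against the summary statistics above. This is a minimal sketch, assuming only the rounded figures reported here. The standard deviation of the differences follows from s_d² = s_pre² + s_post² − 2·r·s_pre·s_post. The function name is hypothetical.

```python
import math


def paired_t_from_summary(mean_pre: float, mean_post: float,
                          sd_pre: float, sd_post: float,
                          r: float, n: int) -> float:
    """Paired t statistic rebuilt from means, SDs, and the PRE-POST correlation."""
    # SD of the differences from the two SDs and their correlation.
    sd_diff = math.sqrt(sd_pre**2 + sd_post**2 - 2 * r * sd_pre * sd_post)
    return (mean_pre - mean_post) / (sd_diff / math.sqrt(n))


t = paired_t_from_summary(80.360, 78.020, 2.887, 3.394, 0.902, 5)
print(round(t, 2))
```

The result, about 3.55, agrees with the reported 3.541 up to the rounding of the inputs. With N − 1 = 4 degrees of freedom, it is compared with the t-table value of 2.776.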

The last table is the Paired Samples Test. It can be interpreted as follows:

Hypothesis

H0 = the intra-rater reliability of English teachers at Al-Amin Islamic Boarding School Mojokerto in scoring essay test is not consistent.

H1 = the intra-rater reliability of English teachers at Al-Amin Islamic Boarding School Mojokerto in scoring essay test is consistent.

Significance level

Sig. = 0.05

Critical area

Based on t-test:

Reject H0 = t-test > t-table (5%, N-1)

Accept H0 = t-test < t-table (5%, N-1)

Based on p-value (Sig.):

Reject H0 = p-value < 0.05

Accept H0 = p-value > 0.05

Decision

t-test = 3.541 > t-table (5%, N-1) = 2.776; Sig. = 0.02 < 0.05; therefore, H0 is rejected.

The intra-rater reliability of English teachers at Al-Amin Islamic Boarding School Mojokerto in scoring essay tests was consistent.

B. Discussion


inconsistent in Organization. Unfortunately, the fifth and sixth raters appeared to be the least consistent. Their ratings were very poor in all categories. In fact, their post-scores even ran contrary to their own pre-scores, so their coefficients were negative.

To make the conclusion easier to draw, all the various results were averaged, and the averages were analyzed with the Cronbach alpha coefficient. Based on the reliability interpretation, this produced Very High consistency, with an intraclass correlation of .924 in SPSS 23. This value is above 0.7, the standard threshold for the Cronbach alpha coefficient in deciding reliability. In short, the English teachers of Al-Amin Islamic Boarding School Mojokerto were shown to have good reliability. In addition, the paired t-test, as the significance calculation of inferential statistics, also met the rules for rejecting the null hypothesis: 1) the t value was greater than the t-table value (3.541 > 2.776), and 2) Sig. = 0.02 was less than 0.05, the significance level. This means that the result was real, not incidental. Although two raters were inconsistent or unreliable in all categories, this did not affect the calculation, which showed that the intra-rater reliability of English teachers at Al-Amin Islamic Boarding School Mojokerto was internally consistent. It can be said that the inconsistent scores happened by chance, with many exceptions arising from the raters themselves that can be investigated in future research.